How bias can skew data sets, and what can be done to fix it

- What is bias in data sets? What different types of bias can infiltrate my data?

- How has bias in data sets affected other artificial intelligence (AI) ventures?

- What can be done to ameliorate the effects of bias?

AI-powered solutions such as face recognition and person re-identification can bring large benefits to enterprise security and public safety and, potentially, reduce the human bias that enters decisions and actions taken by security and law enforcement personnel. The large data sets that these technologies are trained on, though, are not inherently neutral.

Bias, as defined by the draft AI Ethics Guidelines from the European Commission’s High-Level Expert Group on Artificial Intelligence (AI HLEG):

… is a prejudice for or against something or somebody, that may result in unfair decisions. It is known that humans are biased in their decision making. Since AI systems are designed by humans, it is possible that humans inject their bias into them, even in an unintended way. Many current AI systems are based on machine learning data-driven techniques. Therefore a predominant way to inject bias can be in the collection and selection of training data. If the training data is not inclusive and balanced enough, the system could learn to make unfair decisions. At the same time, AI can help humans to identify their biases, and assist them in making less biased decisions.

Three common types of bias can affect your data and, in turn, propagate and amplify human bias:

- Sample bias. Sample bias occurs when the data used to train your model does not accurately reflect the environment in which your model will be operating.

- Prejudice bias. Prejudice bias occurs when training data reflects cultural or other stereotypes, whether they were introduced consciously or unconsciously.

- Measurement bias. Measurement bias occurs when the instrument used to collect or measure the data distorts it, so that the data no longer accurately represents the environment it came from.
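Sample bias is the easiest of the three to check for numerically. The sketch below is one possible approach, not a prescribed method: it compares each group's share of the training data with the share you expect in the deployment environment. The function name `representation_gaps`, the group labels, the expected shares, and the 10-point threshold are all hypothetical values chosen for illustration.

```python
from collections import Counter


def representation_gaps(training_groups, expected_shares):
    """Return, per group, (share in training data) - (expected deployment share).

    A strongly negative gap suggests the group is underrepresented
    in the training data relative to where the model will run.
    """
    counts = Counter(training_groups)
    total = sum(counts.values())
    gaps = {}
    for group, expected in expected_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        gaps[group] = observed - expected
    return gaps


# Hypothetical data: one group label per training image.
training_groups = ["a"] * 80 + ["b"] * 15 + ["c"] * 5

# Hypothetical shares expected in the deployment environment.
expected_shares = {"a": 0.5, "b": 0.3, "c": 0.2}

gaps = representation_gaps(training_groups, expected_shares)

# Flag groups whose training share falls more than 10 points short
# (the threshold is an arbitrary choice for this example).
underrepresented = sorted(g for g, gap in gaps.items() if gap < -0.10)
print(underrepresented)
```

A check like this only catches sample bias along attributes you have labels for; prejudice and measurement bias usually require reviewing how the data was collected and annotated.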


Bias in data sets can cause public embarrassment, or worse

There have already been a few notorious examples of bias infiltrating data sets in publicly humiliating or damaging ways. For instance, the American Civil Liberties Union of Northern California uncovered bias in Amazon’s facial recognition software, Rekognition, after the software incorrectly identified 28 members of Congress as potential criminals in a case of racially biased false identification. The findings sparked outrage, and members of Congress sent letters to Amazon demanding a halt to the use of the software. In practice, once a person is identified through facial recognition, the identification should be independently verified before any action is taken. So, while the misidentifications were startling, the human review steps that follow should catch such an error before it causes serious harm.

Another example of bias infiltrating data sets occurred when Microsoft exposed an AI bot to Twitter for less than 24 hours. Tay, the AI bot, was designed to become “smarter” the more people chatted with her, but within hours Tay was responding to questions with racist comments. It would be far more interesting, and scarier, if Tay had developed its own “general intelligence” and decided “on its own” to turn into a horribly racist bot. In reality, it was only doing what it was trained to do: reflect the Twitter users it learned from and their language. Rather than an independently intelligent being, the bot was essentially an accidental mirror of humanity in algorithmic form. Clearly, there was more programming work to be done before Tay was exposed to Twitter.


Mitigating bias in your data sets

So, what can be done to combat the infiltration of bias in data sets? There are several different approaches you can take. Strict policies for data governance after collection can help mitigate bias. According to the AI Ethics Guidelines, “The datasets gathered inevitably contain biases, and one has to be able to prune these away before engaging in training. This may also be done in the training itself by requiring a symmetric behavior over known issues in the training set.” Developing training and policies to review data sets for potential bias spots can be an effective way to reduce bias.
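One way to make such a review concrete is to check whether a model behaves symmetrically across groups on a labeled evaluation set. The sketch below is an illustrative assumption rather than a method described in the guidelines: it computes the false match rate per group (the fraction of non-matching pairs that the system wrongly reports as a match) and the ratio between the worst and best group. The record layout, group labels, and counts are hypothetical.

```python
from collections import defaultdict


def false_match_rate_by_group(records):
    """records: iterable of (group, predicted_match, is_true_match) tuples.

    Returns {group: false matches / non-matching pairs}, skipping groups
    that have no non-matching pairs to evaluate.
    """
    false_matches = defaultdict(int)
    non_matches = defaultdict(int)
    for group, predicted_match, is_true_match in records:
        if not is_true_match:
            non_matches[group] += 1
            if predicted_match:
                false_matches[group] += 1
    return {g: false_matches[g] / n for g, n in non_matches.items() if n > 0}


def disparity_ratio(rates):
    """Highest group rate divided by lowest; 1.0 means symmetric behavior."""
    lowest = min(rates.values())
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no false matches in any group
    if lowest == 0:
        return float("inf")
    return highest / lowest


# Hypothetical evaluation results for two groups.
records = (
    [("a", True, False)] * 2 + [("a", False, False)] * 98    # 2 false matches in 100
    + [("b", True, False)] * 8 + [("b", False, False)] * 92  # 8 false matches in 100
    + [("a", True, True)] * 10 + [("b", True, True)] * 10    # true matches are ignored
)

rates = false_match_rate_by_group(records)
ratio = disparity_ratio(rates)
print(rates, ratio)
```

A ratio well above 1 points to a group for which the system produces disproportionately many false matches, which is a signal to revisit how that group is represented in the training data.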

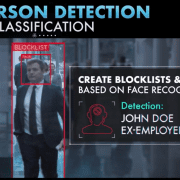

Another way to reduce bias in data sets is to train your AI on a wide range of data. Vintra has taken this task seriously by training FulcrumAI’s facial recognition on extremely diverse data sets of photographs and faces. This variety helps reduce both sample and prejudice bias in the training data, meaning that when you use our real-time facial recognition software or post-event cloud solutions, you are less likely to encounter embarrassing or painful bias-driven mistakes.
